Red/Black gambling game

I heard a puzzle the other day. At first it seemed pretty trivial, but the more I thought about it, the more interesting it became. It involves a deck of regular playing cards.

The puzzle is based around a gambling game. A deck of cards is shuffled then placed face down on a table. The cards are turned over one-by-one.

|

Rules: If a black card is turned up, you win $1.00 If a red card is turned up, you lose $1.00 |

|

You start the game with no money, and a running total is kept. You can leave the game and walk away with your cumulative winnings at any time. The question is, how much would you pay to play this game? Another way to say this, is what is the Expected outcome of the game? (Meaning if the game is played optimally many, many times, how much could you expect, on average, to win in the long run per game?) Of course, to play the game optimally, we need to know what the optimal stopping strategy is. When should we quit and ‘bank’ our earnings? Quit too soon, and we might potentially leave money to be earned in the not seen cards as there might be long runs with a high saturation of black cards in the unseen portion. |

|

Common Sense

If you think about this for a little while, a couple of interesting things come to light:

It’s impossible to lose money in this game! There are an equal number of black cards and red cards. Even if things are going badly, you know that the last card to be turned up will net out the running count to zero.

If things are initially going well, and you start by drawing a good percentage of black cards to build a strong positive score, the concentration of black cards left decreases making it harder to improve your score.

Conversely, if you get off to a poor start, every bad card you draw increases the concentration of good cards left in the deck.

Before you turn over the last card, you will know what it will be. You will always know this. (Potentially you will know what other cards are before the end are if you exhaust all of one color).

The optimal stopping rule can’t be just one value (such as stop when you get to $x.00). It has to be function of what cards we’ve already seen. (As soon as even one card is turned up, it adjusts the probabilities of those left, so our strategy needs to be a function of our current score, and the number of each kind of card left).

Our current score is directly related to what cards are left. If there are 20 red cards, and 17 black cards left, we know our current score must be $3.00 (Because we must have already drawn nine black cards +$9.00 and six red cards −$6.00)

We can use this above fact to determine our optimal stopping strategy. After each card is drawn we know the current balance we have. All we need to do it is work out the expected value for the deck that is left. If the expected value of cards to come is lower than our current score, we stop. If the expected value is higher than our current balance, it’s better to continue drawing. Sure, we don’t know what the next card will be, we just know the probabilities. At each turn of the card we reassess whether we currently have more money than we’d earn (on average) if we continued.

|

|

|

Divide and Conquer

|

This is one of those great problems that we can solve with a recursive approach. Let's look a positions close to the end of the game, solve these problems, then see how we can apply this knowledge to situations earlier in the game. |

One card left

Imagine there is just one card left. We've turned over all the other cards. Since we've seen all but one card we know what the unturned up card is going to be.

|

If the unturned card is black, this means that we must have already turned over 26 red cards and 25 black cards. This means that our running score must currently be −$1.00 (+25−26). We therefore should turn over this card as it will get our running total to zero and improve our position. The Expected value of this position is zero. If we play with optimal strategy, which is the take this last card, the best we can finish the game with is zero dollars. |

|

If the unturned card is red, this means that we must have already turned over 26 black cards and 25 red cards. This means that our running must currently be +$1.00 (+26−25) Turning over the last card will guarantee to hurt our score. We should stop at this point and we can be sure of keeping the $1.00 balance. The Expected value of this position is $1.00 |

|

If there is one black card left, the expected score is Zero

If there is one red card left, the expected score is $1.00

Zero of any one type left

|

We can extend this logic with some careful thinking. If there is ever a time when there are zero red cards left (irrespective of the number of black cards), then the expected outcome of the game is zero! If there are no red cards left, it means we've already turned over every red card. We've taken a hit for every red card already. The best we can do is to turn over all the remaining cards (all of which are black). This will get us back to a score of zero on the last card. This is the best we can do. The expected outcome of this situation is zero. |

|

If there are no black cards left, it means that there are no cards left in the deck that can improve our score. Every turn of a card would lose us a dollar. We should stop playing. We also know what our score would be. Our score will be equal to the number or red cards outstanding (the number of unturned cards left in the deck). The expected value of this position is the number of cards left. |

|

If there are two black cards and no red cards left, the expected score is Zero

If there are two red cards and no black cards left, the expected score is $2.00

Generically:

If there are m black cards and no red cards left, the expected score is Zero

If there are n red cards and no black cards left, the expected score is $n

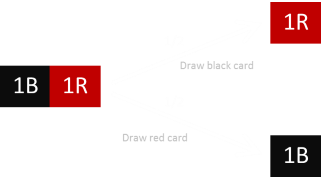

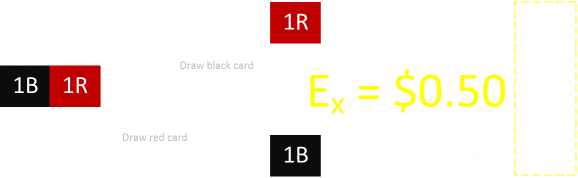

One Black and One Red

|

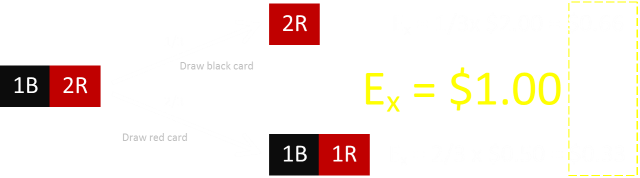

We covered what would happen when there are just two black cards left (no red cards). That would be an expected value of zero. We also covered what would happen where there are two red cards left (no black cards). That would be an expected value of $2.00 If there is one of each card left, there are two possible outcomes. You could draw a black card, or you could draw a red card. There is a 50:50 chance of either event. |

We already know the expected value when there is just one black card left, and when there is just one red card left.

|

To compound the two branches (logical or operation), we add the expected values from each branch. The expected value for One Red and One Black left is, therefore $0.50 |

If there is one red card and one black card, the expected score is $0.50

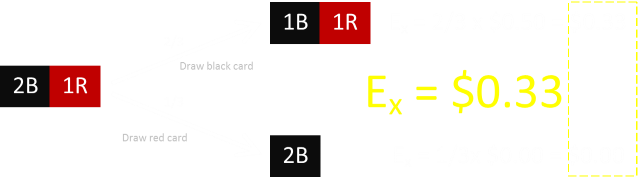

Three cards left

There are various possible ways that three cards can be left. All three of one kind (which we covered earlier), or there could be two of one, and one of another. Below is the diagram for two black and one red:

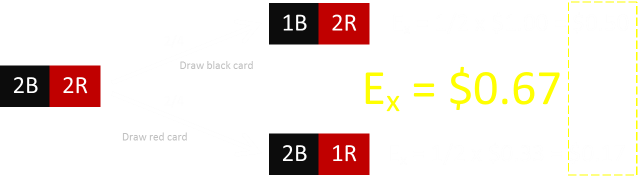

Here is the diagram for two red and one black:

Here is a summary of the results of three cards left:

If there are three black cards and no red cards left, the expected score is Zero

If there are three red cards and no black cards left, the expected score is $3.00

If there are two black cards and one red card, the expected score is $0.33

If there are two red cards and one black cards, the expected score is $1.00

Four Apples up on top*

I'm sure you can see where this is going. Here is an example of the probability tree for the expected value for two red and two black:

*Dr Seuss

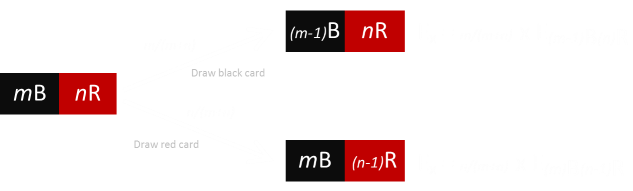

More generically, if there there m black cards left, and n red cards then:

Using this strategy we can calculated the expected value for any combination of red and black cards remaining.

Should I stay or should I go?

Now we have a way to calculate the expected outcome. We also know that the current score at any position is the number of red cards remaining subtract the number of black cards remaining (the inverse of the cards already seen).

If the current score at any position is higher than the expected value that could be achieved from that position going forward, we should stop and take the cash. If the expected value of continuing is higher, then it is in our best interest to continue drawing.

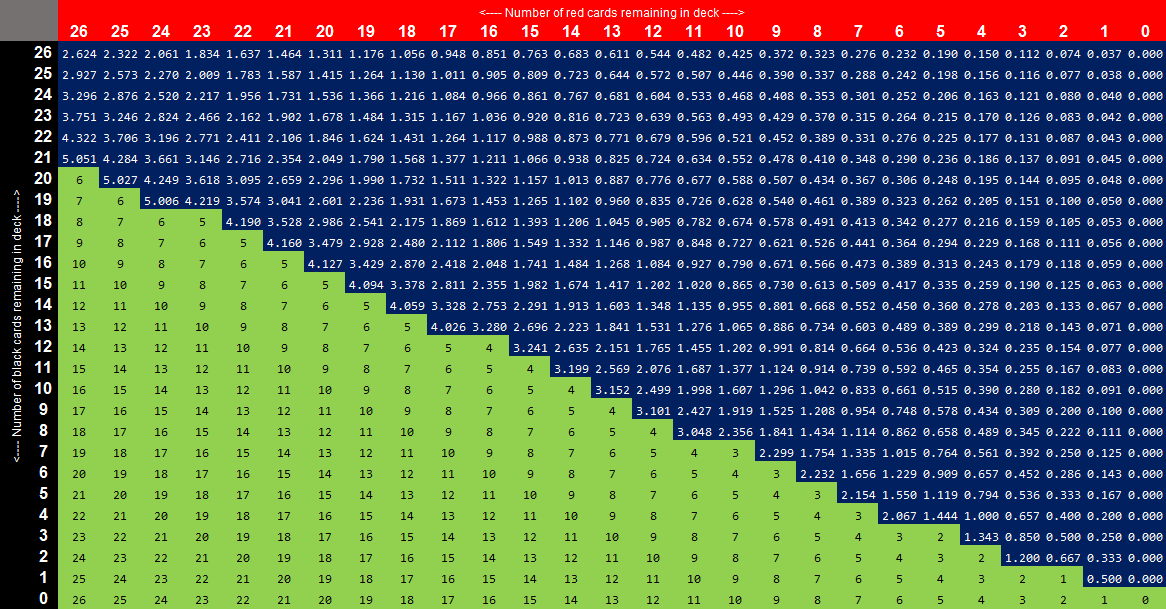

Here is a chart of all these calculations. In the grid, the blue shaded squares show the expected values (when they are are higher than the score at the current position), and the green shaded squares show where the current score is higher:

How to use:

Start in the top left corner.

The math tells us that it is always in our interest to start to play to the game.

The columns represent the number of red cards remaining. The rows the number of black cards.

Draw a card and move either to the right or down the grid, reading the expected value as you go.

As long as you are on a blue square, continue drawing (the number tells you what you might expect to win from this point).

As soon as you move onto a green square, STOP!

Bank the amount of money you have (which is the number in the grid).

Interesting pieces of trivia

|

|

|

|

|

Fixed stopping Rule

We've proved that the optimal (dynamic) stopping strategy results in an expected gain of a little over $2.62

What would happen if, instead of adopting this dynamic strategy, we did apply a static rule of "Stop when we get to $x".

What is the expected outcome? Choose a stopping value too high and, though you win big when it hits, it will not occur very often.

Choose a stopping value too low and, though you'll get that money often, you'll be taking your winning prematurely, with potential earnings still in the deck.

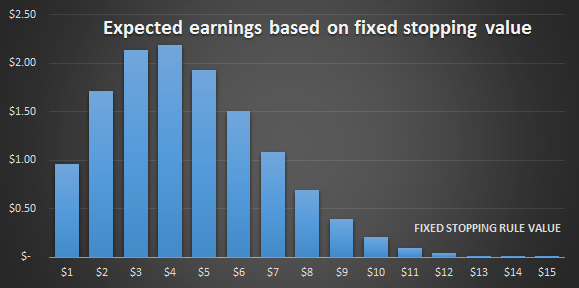

Here is a graph of the expected earnings based on static stopping scores. On the x-axis is plotted the possible stopping scores. e.g. Stop when you obtaining a total of $7 …. On the y-axis is plotted the expected earnings when averaged over a large number of games.

As you can see, with a deck of cards, if you are going to chose one value, the optimal value is to stop when you obtain a score of $4. If you adopt this rule your expected winnings over many games is approx $2.18

This is smaller than adopting the dynamic strategy. (If you changed the rule to stopping at $5, over the long run you'd earn less than $2 per game).

You can find a complete list of all the articles here. Click here to receive email alerts on new articles.

Click here to receive email alerts on new articles.